1. A Spiritual Leader Who Picked the Wrong Date

In 2011, radio broadcaster and self-styled biblical scholar Harold Camping announced with complete certainty that the Rapture and end of the world would happen on May 21. Thousands of followers prepared, believers paused normal life, and headlines around the world asked: “Is the world about to end?” When the date passed without incident, Camping said he was “flabbergasted” and looked for explanations.

This wasn’t a quiet prediction; it was a full-scale, public act of confidence that didn’t pay off. It shows how powerful certainty can feel when delivered by someone with followers and media attention behind them. It also shows the human cost when faith in a prediction outweighs critical thinking. Stories like this intrigue because they reveal the limits of expert authority and the importance of healthy skepticism.

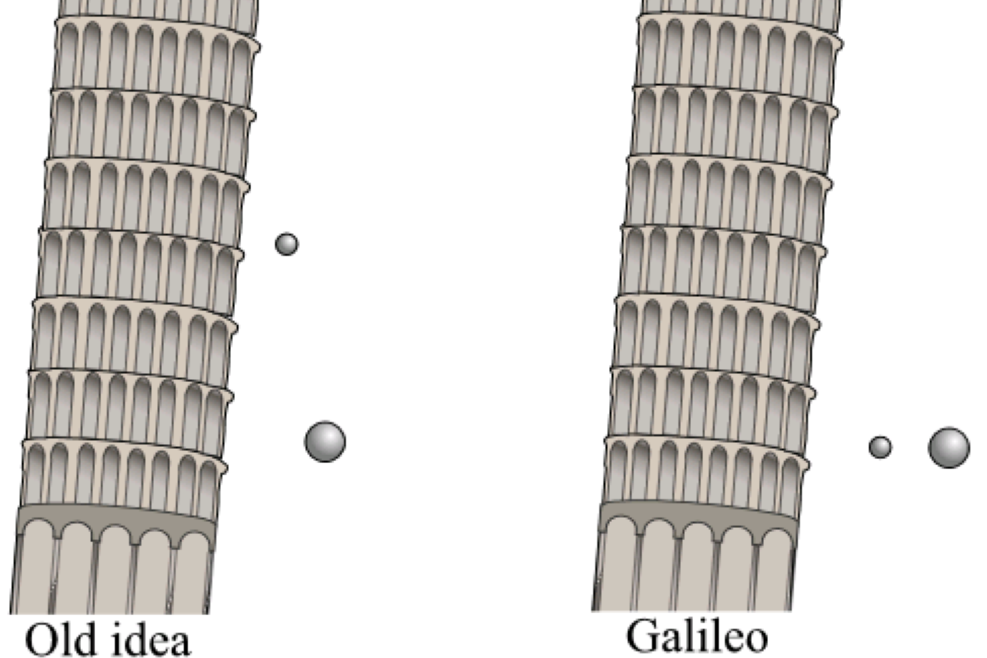

2. When “Heavier Things Fall Faster” Was Scientific Gospel

For nearly two millennia, scholars confidently taught that heavier objects fall faster than lighter ones. The logic seemed bulletproof: drop a rock and a feather, and the rock hits first. It wasn’t until the 17th century that Galileo challenged this deeply held belief and showed that without air resistance, objects fall at the same rate. This wasn’t a throwaway thought; it upended centuries of physics teaching and reminded us that even widely accepted “facts” can be rooted more in assumption than evidence. Experts of the time were certain, yet a simple experiment reshaped our understanding of motion.

It’s a great example for all of us who have ever been sure we’re right only to learn later that our confidence was a step ahead of our understanding. Science evolves, and sometimes we learn more from what we got wrong than what we got right. If this kind of story makes you curious about how knowledge changes over time, explore more of these “confidently incorrect” moments and see how they shaped the world.

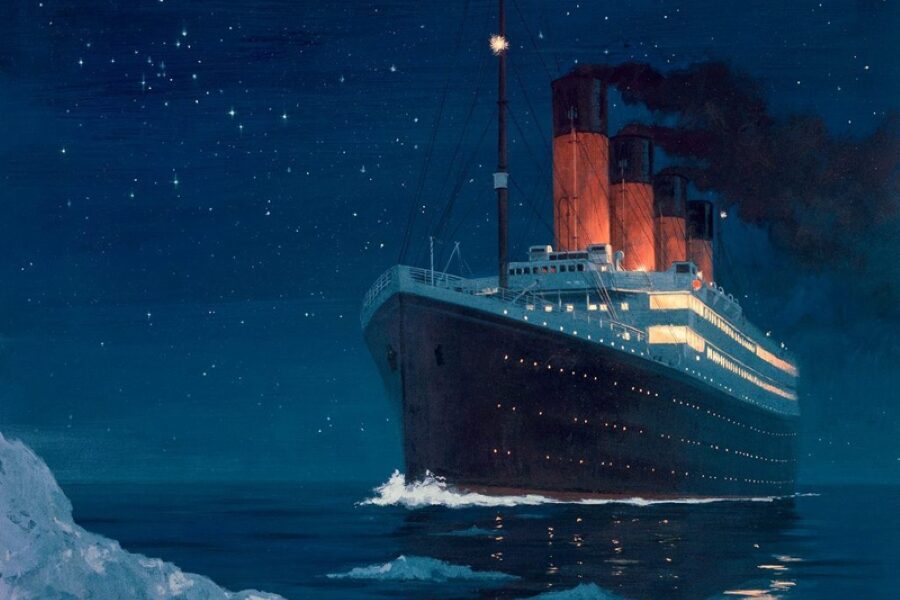

3. The Unsinkable Ship That Wasn’t

In 1912, when the RMS Titanic entered service, engineers and commentators proclaimed it “unsinkable.” That confident label was everywhere, in ads, newspapers, and conversations among travelers, and it shaped people’s expectations as the ship set out across the Atlantic. But after hitting an iceberg, the Titanic sank in a matter of hours, with more than 1,500 lives lost. What seemed like expert assurance turned into a stark lesson in humility.

This moment still resonates because it reminds us that confidence, even from highly trained engineers, doesn’t guarantee foresight. We hear the word “unsinkable” and assume infallibility, but reality has a way of quietly proving certainty wrong. When we’re certain, it’s worth pausing to remember that possibility is stronger than prediction. Dive deeper into the stories behind expert errors, and you might rethink what you take for granted.

4. Doctors and Smoking: A Confidence Disaster

For decades in the early 20th century, doctors appeared in cigarette ads and publicly supported smoking, claiming it soothed throats and had health benefits. It was a time when medical authority carried enormous weight, so the confident endorsement of cigarettes influenced generations. The public believed it because doctors endorsed it and the media amplified those claims. It wasn’t until vast research linked smoking to lung cancer and heart disease that this medical consensus completely flipped.

Looking back, it’s almost surreal to realize that something we now understand as harmful was once medically encouraged. This tells us something essential about expertise: it’s shaped by evidence and by the limits of what’s known at the time. Confidence without full knowledge can lead entire societies astray. If that makes you think twice about expert claims today, you’re not alone: skepticism and curiosity are good companions on the path to understanding.

5. A Famous Economist Who Missed the Internet Boom

In 1998, economist Paul Krugman, who would go on to win the Nobel Prize in 2008, confidently predicted that by 2005, the Internet’s impact on the economy would be no greater than that of the fax machine. At the time, the Internet was still emerging, and many experts struggled to project its long-term influence. But within just a few years, online business, communication, and technology had reshaped almost every aspect of life, from shopping and work to social connections, going far beyond anything fax machines ever did.

This one sticks with us because it shows that even brilliant minds can greatly underestimate change. It’s not that Krugman didn’t understand economics; he just didn’t foresee how deeply accessible connectivity would transform markets and behavior. It’s a humbling reminder that assumptions about the future are always tentative. If you find these large-scale misses as fascinating as I do, stick with this series. There’s more to explore about how experts can be both insightful and unexpectedly wrong.

6. The Blockbuster That Flopped in Everyone’s Eyes

In 1997, studio executives were sure Batman & Robin would be a massive hit. After all, Batman was a cultural icon, and the previous movies had performed well. Critics even expected it to dominate the box office. But when the movie premiered, audiences reacted with dismay. Its campy dialogue, over-the-top costumes, and uneven plot made it one of the most infamous superhero flops of all time. Despite expert marketing strategies and fan anticipation, reality didn’t match confidence.

It’s a gentle reminder that prediction isn’t certainty. Even when data, trends, and experience point one way, human taste can defy expectations. Sometimes, what experts consider foolproof simply doesn’t connect with audiences. For all of us who have ever been sure something would succeed only to watch it stumble, it’s both humbling and oddly comforting.

7. The Challenger Launch Nobody Saw Coming

In 1986, NASA was confident that the Space Shuttle Challenger was safe for launch, even after engineers raised concerns about how the O-rings would perform in cold weather. On the morning of January 28, despite those warnings, the shuttle lifted off. Tragically, 73 seconds later, it broke apart, claiming the lives of all seven astronauts aboard. Experts’ confidence in the system overshadowed the visible risks, and their assumptions about past success proved tragically flawed.

The lesson extends beyond aerospace: confidence is not a substitute for caution. It shows how organizational pressure and overreliance on prior performance can cloud judgment, even among highly trained professionals. By studying these mistakes, we gain insight into human nature, risk management, and the importance of questioning assumptions.

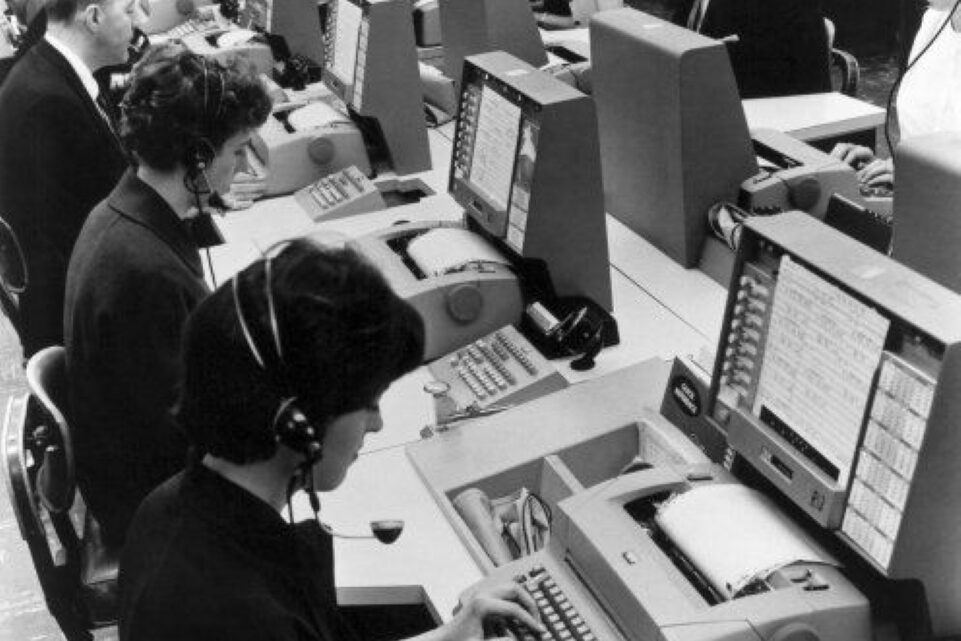

8. When Computers Were Supposed to Replace Humans

In the 1960s, some industry leaders confidently predicted that computers would fully replace human workers in offices by the 1980s. They imagined a world of automated paperwork, payrolls, and even managerial tasks. While computers did revolutionize work, they didn’t replace humans completely. Instead, they changed how tasks were done, required new skill sets, and created jobs that hadn’t existed before. The expert forecasts underestimated the adaptability of both people and technology.

This teaches an important point: predictions about technology often reflect hope or fear more than actual capability. Being certain about the pace of innovation can be misleading because humans and machines evolve together. It’s a useful reminder that enthusiasm must be balanced with patience and observation.

9. The Flu That Didn’t Kill Millions

In 1976, health experts feared a new swine flu outbreak in the United States would spark a deadly pandemic. The government launched a nationwide vaccination program, confident it was necessary to prevent millions of deaths. But the virus turned out to be far less dangerous than expected, causing very few cases. Moreover, some vaccinated individuals experienced side effects, which created public backlash and controversy. Experts were sure they were saving lives, yet the outcome revealed just how tricky predicting epidemics can be.

The story reminds us that even life-saving intentions can collide with uncertainty. It underscores the challenge of decision-making under pressure and the limits of knowledge in rapidly evolving situations. For readers, it’s another example of how confident predictions don’t always match reality.

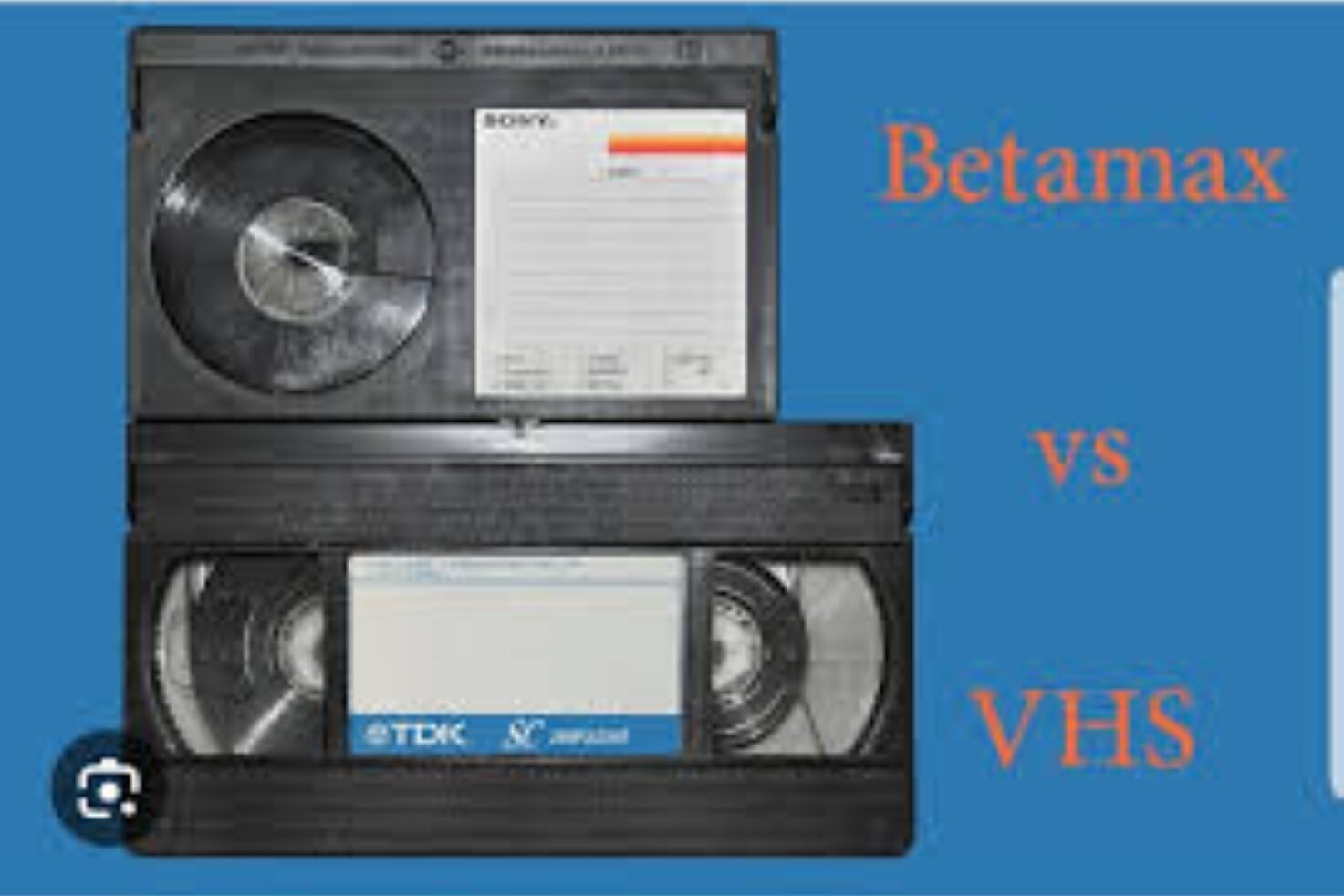

10. The Betamax That Lost to VHS

In the late 1970s, Sony experts confidently believed Betamax would dominate home video because of its superior quality and reliability. They predicted VHS, a competing format with longer recording times but lower picture quality, would never catch on. Yet, by the mid-1980s, VHS had become the industry standard, and Betamax gradually disappeared from shelves. This wasn’t a minor miscalculation; it was a market-wide overturning of expert confidence.

It’s a lesson in humility: technical superiority doesn’t always equal success. Market behavior, convenience, and user preference often outweigh expert projections. These stories show that even top professionals can misjudge human priorities, reminding us to take confident predictions with a grain of salt.

11. The Computer That Couldn’t Speak Human

In the 1960s, many AI experts confidently claimed that machines would soon be able to hold human-like conversations. Early predictions suggested that by the 1970s, computers would converse naturally and understand language as well as humans. Programs like ELIZA could mimic conversation, but real understanding remained out of reach for decades. Experts underestimated the complexity of human language, context, and meaning.

The lesson here is simple: confidence in an exciting idea can outpace reality. Technology evolves, but human behavior, nuance, and context often defy neat predictions. Those early AI misfires remind us that curiosity and patience matter more than certainty. For anyone watching modern AI breakthroughs, it’s a gentle reminder to balance enthusiasm with realism, and to celebrate small progress even when the big picture still takes time to unfold.

12. The Stock Market Crash Nobody Saw

In the 1920s, leading financial experts confidently claimed that the stock market was on a permanent upswing. Economists and brokers assured the public that investing in stocks was virtually risk-free. When the market crashed in 1929, millions lost life savings, businesses failed, and the Great Depression followed. Experts had overlooked the fragile credit systems and speculative bubbles building up under their feet.

It’s a story that keeps financial advisors humble. Even well-trained professionals can miss systemic risks when confidence blinds them. For everyday readers, it’s a reminder to question assumptions, diversify choices, and prepare for uncertainty. The stock market is full of cautionary tales that show how expert certainty can crumble in complex systems.

13. The Population Bomb That Didn’t Explode

In 1968, biologist Paul Ehrlich published The Population Bomb, warning that overpopulation would lead to mass starvation by the 1970s and 1980s. Experts agreed, predicting dire global food crises. Yet, advances in agriculture, technology, and policy prevented the widespread famine they feared. While overpopulation remains a concern, the scale of disaster was far less severe than initially predicted.

This teaches us that predictions about society are particularly tricky. Human ingenuity, adaptability, and unexpected innovations often defy dire forecasts. Confidence in such predictions can oversimplify a complex world. These moments remind us that while experts offer guidance, the future is rarely set in stone, and flexibility is as important as foresight.

14. The Dinosaur Experts Who Got a Tail Wrong

In the early 20th century, paleontologists were confident that dinosaurs dragged their tails on the ground like giant lizards. Museum exhibits, textbooks, and scientific papers depicted them that way. But fossil evidence and modern biomechanics have since shown that many dinosaurs held their tails off the ground for balance and movement. Experts’ confidence was based on the best observations of the time but lacked the context that later discoveries provided.

It’s a charming reminder that even in science, what seems obvious may not be true. The way experts draw conclusions can change dramatically with new evidence. For curious readers, it’s reassuring to know that questioning and re-examining assumptions is part of the process, and learning from mistakes is how knowledge grows.

15. The Cold War Missile Misread

During the Cold War, military analysts confidently predicted that the Soviets had more nuclear weapons than they actually did. Reconnaissance errors, faulty intelligence, and assumptions about Soviet capabilities led to heightened tensions and costly arms races. Experts were certain that over-preparing was safer than underestimating, yet the actual numbers told a different story.

This shows how confidence in one’s expertise, combined with incomplete information, can escalate fear and drive expensive, risky decisions. The lesson extends beyond geopolitics: it’s a reminder to check data, question assumptions, and accept that even professionals can misread complex situations. Humility in expert analysis is as important as skill and knowledge.

16. The Microwave That Experts Thought Was Dangerous

When microwaves first became available for home use in the 1960s, many appliance experts confidently warned that they would cause serious health problems, including radiation poisoning and cancer. Magazines, trade journals, and even some doctors reinforced these fears, and public hesitation slowed adoption. Yet decades of research have shown that microwaves, when used properly, are safe, convenient, and incredibly effective for everyday cooking.

It’s a fascinating example of how fear can masquerade as expert certainty. Confidence is persuasive, but sometimes it’s based on imagination rather than evidence. The microwave’s story reminds us that innovation often meets resistance from those who assume the worst. Even the smartest voices can misread risks.

17. The World Wide Web Will Fizzle Out

In 1995, astronomer and author Clifford Stoll confidently predicted in a Newsweek essay that the World Wide Web would remain a niche tool for academics and hobbyists and would never become a major part of daily life. He imagined the Internet as a fad, unlikely to replace newspapers, TV, or in-person interactions. History proved him wrong: today, the Web shapes everything from commerce and social connection to education and entertainment.

This reminds us that even informed opinions can underestimate the scale and speed of innovation. Experts are human too, prone to projecting the limits of their own experience onto the future. The story encourages curiosity over certainty, showing that breakthroughs often arrive in ways we cannot fully anticipate.

18. The Hoverboard That Never Hovered

In the 1980s, pop culture and tech enthusiasts confidently predicted that hoverboards would appear in everyday life by the early 2000s, inspired by movies and futuristic visions. Engineers and inventors promised levitating skateboards that would glide effortlessly over streets, and magazines treated it as an imminent reality. Yet decades later, hoverboards as seen in fiction remain largely science fiction, with only limited experimental models or magnetically assisted versions available.

This story reminds us that cultural enthusiasm can make experts feel certain about what the future will look like. Confidence often mixes with imagination, and even skilled engineers can overestimate how fast technology will develop. It’s a playful yet instructive example that encourages curiosity and patience: the future rarely unfolds exactly as imagined, and what seems inevitable today might take years to arrive, or never arrive at all. For anyone who has been captivated by a “sure thing” that didn’t happen, this is a familiar lesson in humility and possibility.