1. What Happens When AI Has to Choose Who Lives

Imagine you’re behind the wheel of a car that drives itself. It sounds pretty cool until you picture the car making a split‑second choice between hitting a group of pedestrians and swerving in a way that risks your life. That’s the ethical puzzle researchers and engineers are wrestling with today. In research on the ethics of autonomous vehicles, these “trolley problem”‑style questions aren’t just academic anymore; they’ve become concrete design challenges for AI systems that are already being tested on public roads.

In discussions of AI ethics, experts stress that programming a car to decide between harms isn’t simply about logic; it’s about values, cultural contexts, and fairness. One study notes that while many people feel a car that minimizes overall casualties is the most ethical choice, those same people are often uncomfortable buying a car that could sacrifice their safety to protect others. This contradiction reveals a deep tension between societal good and personal choice. Ultimately, as cars gain more autonomy, how they weigh lives and who gets to decide those rules will shape public trust and adoption. If we want safer roads and less human error, these ethical choices need honest, transparent conversation now.

2. When Safety Claims Meet Real‑World Incidents

Comfort and trust go hand in hand, and when it comes to self‑driving cars, safety is the first thing most Americans care about. Companies like Waymo report significant progress: millions of miles driven autonomously on public roads with a claimed strong safety record, suggesting that these cars may avoid serious crashes more often than human drivers do.

But the real world isn’t perfect. Recent incidents involving autonomous vehicles have raised eyebrows among safety advocates and regulators. Some crashes involve unexpected behavior from AI systems or rare edge‑case scenarios that challenge even the best sensors and algorithms. These moments remind us that while autonomous vehicles could dramatically reduce crashes overall, they aren’t foolproof and can struggle with unpredictable human behavior on the road. The mixed bag of safety claims and real incidents reinforces that AI on wheels must be both technically reliable and ethically grounded. Seeing solid safety numbers is reassuring, but understanding how decisions are made and where responsibility lies is equally critical for public trust.

3. Public Fears and the Human Edge in Driving

Even if the tech works perfectly, human feelings about control matter a lot. Some people are excited about letting AI take the wheel, dreaming about smoothly flowing traffic and stress‑free commutes. Others feel uneasy, admitting that handing over control to a machine, even one claimed to be safer, feels strange or downright scary.

That emotional unease isn’t trivial. Designers and ethicists note that people often fear what they don’t fully understand, and autonomous decision‑making hits right at that fear. The idea that a machine must make choices in split‑second danger scenarios feels personal and abstract at the same time. Addressing these emotions means more than improving technology; it means listening to concerns, explaining how decisions are made, and building systems where people feel they still have dignity and agency. After all, trust isn’t built by perfect logic alone; it’s earned through understanding and respect.

4. Regulation and the Rules of the Road

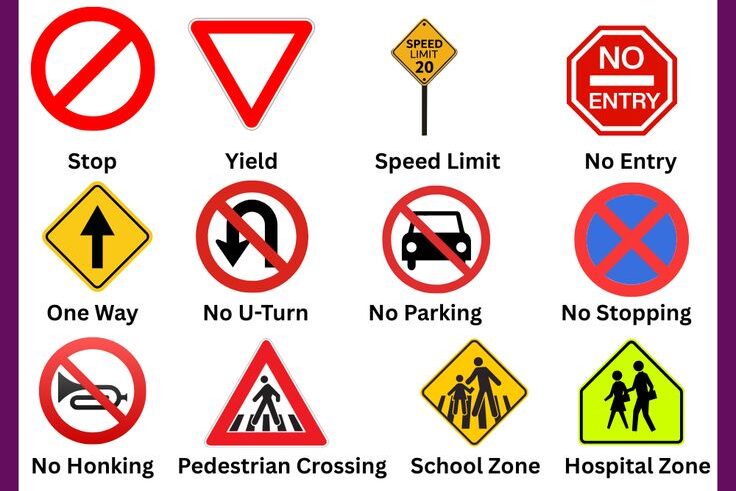

Cars don’t just exist on streets; they exist within laws. As autonomous vehicles become more capable, lawmakers and regulators are scratching their heads over how to govern machines that drive themselves. Ethical AI experts from institutions around the world emphasize that existing rules of the road were built for human drivers, not algorithms making judgment calls in milliseconds.

This challenge goes beyond safety tests and emissions standards. It touches on liability (who’s at fault in a crash?), transparency (can we understand how decisions were made?), and the ethical frameworks that guide behavior when harm can’t be avoided. Some frameworks prioritize overall harm reduction, while others argue for protecting the occupant first by default. Whatever the approach, regulators will play a huge role in shaping the standards that autonomous cars must meet before they can become widespread. These rules won’t just affect engineers and lawmakers; they’ll shape the everyday experience and peace of mind of every driver and passenger.

5. Tech Promise Meets Moral Reality

At the heart of this conversation is a hopeful idea: autonomous vehicles could save countless lives by reducing human error, which is widely cited as a leading factor in crashes today. Research and deployment efforts suggest that AI can be safer than the average human driver under many conditions.

But technology doesn’t operate in a vacuum, and neither do ethics. Building cars that make tough judgments isn’t just a programming problem; it’s a human values problem. As we’ve seen in how preferences vary, how safety claims clash with real incidents, and how public trust is shaped, one thing becomes clear: self‑driving vehicles must be more than technically proficient; they must reflect a society’s shared values. The future of roads depends not just on better sensors and software, but on thoughtful, inclusive dialogue about what we value and how we want our machines to behave in the moments that matter most. Join the conversation. Your perspective could help shape how these decisions are made, making our roads safer and our technology more human.

6. Learning from Crashes That Weren’t Supposed to Happen

When self‑driving cars make headlines for crashes, the coverage can make it seem like the technology is broken. But researchers and safety advocates see a deeper lesson, pointing out that human drivers make errors all the time, too. According to analysis of collision reports, autonomous systems often struggle in unpredictable situations like heavy rain, construction zones, or when other drivers behave erratically. These scenarios have always been difficult for machines to interpret in real time. One engineer quoted in a safety report said, “Edge cases are not rare if you live in a world where humans do unexpected things.” That means companies developing these vehicles are constantly refining their AI to better understand and react to the messy reality of traffic.

At the same time, every crash involving an autonomous car becomes a learning opportunity. Unlike human drivers, AI systems can record vast amounts of data about what happened before, during, and after an event. This data can help engineers and regulators understand why a crash or near‑miss occurred and how to prevent similar situations in the future. For families and commuters who want safer roads, this ongoing improvement process is reassuring, but it also highlights how much work remains before AI becomes a reliable everyday driver. The more we learn from real‑world events, the better these systems can become.

7. What Ethical Transparency Means for Riders

One of the biggest concerns people have about autonomous cars is not just that AI makes choices, but that we do not always know how it makes them. When a person drives, you can ask them why they did something. When an algorithm drives, that question becomes much harder to answer. Ethical transparency means designing systems that can explain decisions in terms people can understand. Experts say that without this transparency, trust will always lag behind the technology. A transportation ethicist recently noted, “If riders cannot see how a decision was made, they may assume the worst.”

This goes beyond technical jargon and into how information is shared with everyday users. For example, if a car slows unexpectedly or moves to avoid an obstacle, a simple explanation on a dashboard screen could help riders understand why the vehicle behaved that way. In the long run, such transparency could influence public acceptance, insurance policies, and how regulators evaluate safety standards. For everyday travelers, knowing that a self‑driving system can justify its choices in plain language may make the difference between feeling nervous and feeling comfortable. This human connection to machine decision‑making is a subtle but important piece of the trust puzzle.
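As a rough illustration of the dashboard idea, here is a minimal Python sketch that turns an internal reason code into a plain‑language message a rider could read. Everything in it is an assumption made for illustration: the reason codes, the wording of the messages, and the `Maneuver` and `explain` names are hypothetical and do not describe any real vehicle’s software.

```python
from dataclasses import dataclass

# Hypothetical reason codes and rider-facing messages (illustration only).
EXPLANATIONS = {
    "OBSTACLE_AHEAD": "Slowing down: an object was detected in the lane ahead.",
    "PEDESTRIAN_NEAR": "Slowing down: a pedestrian is close to the road.",
    "LANE_BLOCKED": "Changing lanes: the current lane is blocked.",
}

# Shown when the system has no specific plain-language message for a code.
FALLBACK = "Adjusting driving: the vehicle is responding to road conditions."


@dataclass
class Maneuver:
    action: str       # what the car did, e.g. "brake" or "lane_change"
    reason_code: str  # why it did it, as an internal code


def explain(maneuver: Maneuver) -> str:
    """Return a short, rider-friendly explanation for a maneuver."""
    return EXPLANATIONS.get(maneuver.reason_code, FALLBACK)


if __name__ == "__main__":
    print(explain(Maneuver(action="brake", reason_code="PEDESTRIAN_NEAR")))
```

The fallback message matters as much as the specific ones: a rider who sees some explanation, even a general one, is less likely to assume the worst than a rider who sees nothing.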

8. Who’s Responsible When an AI Decides?

One question that shows up again and again is about liability. If a self‑driving car causes a crash, who is at fault? The person sitting in the driver’s seat, the software developer, or the company that built the vehicle? This question is not hypothetical. Legal scholars and lawmakers are actively debating how to assign responsibility in crashes involving autonomous systems. Some argue that companies should accept responsibility because they control the AI, while others say that humans still need to remain engaged and therefore share liability. A legal expert summed it up this way: “Assigning fault for automated driving events requires a new framework that current traffic laws were not designed to handle.”

This ongoing conversation matters because liability shapes how products are developed, tested, and insured. From a commuter’s perspective, clarity here means knowing whether you are financially and legally protected when something goes wrong. Insurance companies are already adjusting their models to account for autonomous features, and lawmakers are drafting guidelines that could become national standards. While there is no universal solution yet, this debate reflects a broader truth: technology that shares control with humans also shares responsibility in complex ways. Keeping these discussions public and clear helps people feel grounded in what lies ahead.

9. Balancing Local Laws with Global Tech

While many autonomous vehicle companies are based in the United States or China, cars will eventually cross state and national borders. That means a vehicle’s AI must cope not only with the physical realities of the road but also with legal rules that vary from place to place. For instance, speed limits, right‑of‑way laws, and even ethical expectations can differ between states and countries. A self‑driving car that performs perfectly in one region might encounter conflicting rules in another. A transportation policy review explains, “Regulatory alignment is essential if autonomous vehicles are to operate safely and predictably across jurisdictions.”

This patchwork of laws creates both challenge and opportunity. Harmonized regulations could make deployment easier nationwide, while divergent standards might slow adoption or force companies to tailor software for each region. For everyday drivers, this means that seamless self‑driving travel across the country may take longer to arrive than limited pilot programs in individual cities. States that lead with clear standards could become centers for innovation, while others watch and learn. In the meantime, citizens and officials alike are discovering how much collaboration between engineers, lawyers, and policymakers will shape the roads of tomorrow.
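To make “tailoring software for each region” a little more concrete, here is a small Python sketch of a per‑region rule table. It is only an illustration under stated assumptions: the region names, speed limits, and turn rules are invented rather than real legal data, and `RegionRules`, `rules_for`, and `target_speed` are hypothetical names, not part of any real system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegionRules:
    max_speed_kph: int       # highest speed the car may choose in this region
    right_turn_on_red: bool  # whether turning right at a red light is allowed


# Invented example values; not real legal data for any jurisdiction.
RULES = {
    "region_a": RegionRules(max_speed_kph=105, right_turn_on_red=True),
    "region_b": RegionRules(max_speed_kph=90, right_turn_on_red=False),
}


def rules_for(region: str) -> RegionRules:
    """Look up a region's rules, falling back to the strictest known values."""
    if region in RULES:
        return RULES[region]
    strictest_speed = min(r.max_speed_kph for r in RULES.values())
    return RegionRules(max_speed_kph=strictest_speed, right_turn_on_red=False)


def target_speed(region: str, desired_kph: int) -> int:
    """Cap the desired speed at the region's legal maximum."""
    return min(desired_kph, rules_for(region).max_speed_kph)


if __name__ == "__main__":
    print(target_speed("region_a", 120))
    print(target_speed("region_b", 120))
```

Falling back to the strictest known rules for an unrecognized region is one cautious design choice among several; a real system might instead refuse to operate until it has verified local rules.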

10. How Public Voice Can Shape Tomorrow’s Roads

Looking back at everything we’ve covered, one thing stands out clearly: the future of autonomous vehicles isn’t just a technological story; it’s a human one. People’s hopes, fears, expectations, and values help shape the questions we ask about safety, ethics, and responsibility. Recent public surveys show that Americans may support self‑driving cars in principle, but they want clear rules, trustworthy explanations, and accountability when things go wrong. These are not technical demands; they are human ones.

When we talk about whether a car should “refuse to save your life,” we are really asking what we value as a society and how we want our technology to reflect that. AI can be programmed in many ways, but which of those ways feels right to us? That choice does not belong to engineers alone. It belongs to everyone who will share the road, buy the car, or ride in one. If we want a future where autonomous travel is safe, just, and widely accepted, then being part of the conversation matters. Share your thoughts, ask questions of policymakers, and stay informed. Your voice is part of what makes tomorrow’s roads truly ours.