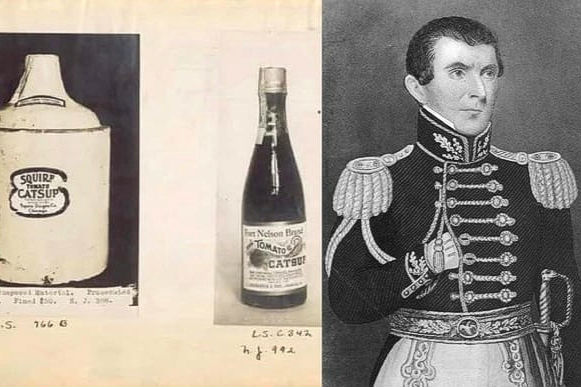

Ketchup As Medicine

In the early nineteenth century, ketchup was not the popular condiment we casually squeeze onto burgers or fries today. Instead, it was marketed as a powerful pharmaceutical. In 1834, an American physician named Dr. John Cook Bennett began promoting tomato-based ketchup as a cure for a range of ailments, including indigestion, diarrhea, and even rheumatism. This was a radical shift, as tomatoes had previously been viewed with great suspicion in the United States, with many people believing they were actually poisonous or “deadly nightshades.”

Dr. Bennett’s influence was so significant that pharmacists began producing concentrated “tomato pills” to treat patients. These pills were sold across the country as a miraculous health tonic throughout the 1830s and 1840s. However, as the mid-nineteenth century approached, the medical claims began to lose credibility due to a lack of scientific evidence and the rise of fraudulent “cure-all” salesmen. By the time Henry Heinz launched his famous standardized recipe in 1876, ketchup had fully transitioned from the medicine cabinet to the dinner table. Today, it remains a household staple, though its origins as a prescribed stomach remedy are a largely forgotten chapter of medical history.

Waffle Iron Innovation

The humble waffle iron is a classic fixture of leisurely Sunday breakfasts, but its role in industrial history is surprisingly revolutionary. In the early 1970s, legendary University of Oregon track coach Bill Bowerman was searching for a way to give his runners more grip without the heavy weight of traditional metal spikes. During a morning meal in 1971, he found inspiration in the grid pattern of his wife’s waffle iron. He realized that an inverted version of that pattern, molded into rubber, could create a lightweight, multi-surface sole that provided incredible traction.

Bowerman’s experimentation was literal; he actually poured liquid urethane into his family’s waffle iron, which unfortunately ruined the kitchen appliance but birthed a sporting empire. This “Waffle Sole” became the foundation for the first Nike sneakers, officially hitting the market in 1974. The design was a massive success, helping to launch the modern running boom by making athletic footwear more versatile and comfortable. It is a striking example of how a simple kitchen tool can spark a multi-billion-dollar industry. To this day, the waffle-inspired tread remains a signature feature of many athletic shoes, proving that great ideas can come from the most domestic of settings.

Bread And Social Class

Bread has been a dietary staple for millennia, but its appearance on the table once signaled a person’s precise rank in society. During the Middle Ages and through the eighteenth century in Europe, white bread was an elite luxury item. Because the refining process required intensive labor to remove the coarse bran and germ, pure white flour was expensive and difficult to produce. Consequently, the aristocracy viewed soft, pale loaves as a sign of purity and wealth, while the working class subsisted on “brown” or “black” bread made from unrefined grains like rye or barley.

This social hierarchy regarding bread remained dominant until the late twentieth century when nutritional science fundamentally changed public perception. By the 1970s and 1980s, health experts began highlighting the essential fiber and vitamins found in whole grains, which were stripped away during the refining of white flour. Suddenly, the “peasant food” of the past became the premium choice for health-conscious consumers, while mass-produced white bread became associated with cheap, processed diets. This dramatic reversal shows how scientific discovery can flip social status on its head. Today, the choice between white and whole-wheat bread is driven more by health preferences than by the size of one’s bank account.

Tea Bags By Accident

Tea bags are an essential part of modern life, yet their global success was entirely unplanned. In 1908, a New York tea merchant named Thomas Sullivan began sending samples of his various tea blends to customers in small, hand-sewn silk pouches. Sullivan intended for the customers to cut the bags open and pour the loose leaves into a traditional teapot. However, many customers found the silk bags so convenient that they simply dropped the entire pouch into hot water. They inadvertently discovered that the tea brewed perfectly well inside the bag without the mess of loose leaves.

The convenience of this “accident” quickly turned into a commercial demand. By the 1920s, manufacturers moved away from expensive silk and began using gauze, and later, heat-sealed paper fibers to make the process more affordable for the general public. During the 1930s and 1940s, the tea bag became a lifeline for busy households, particularly in the United States and the United Kingdom. What started as a clever packaging trick for samples ended up completely disrupting thousands of years of traditional tea-drinking culture. Today, billions of tea bags are used every year, all because a few early twentieth-century customers misunderstood how to use a promotional sample.

Mason Jars Repurposed

Before the luxury of modern supermarkets and electric refrigerators, Mason jars were vital tools for human survival. Patented by John Landis Mason in 1858, these glass jars featured a threaded neck and a metal screw-on lid that created an airtight seal. This invention was a game-changer for families in the nineteenth and early twentieth centuries, as it allowed them to “put up” or preserve fruits, vegetables, and meats during the harvest season to survive the harsh winter months. Without this technology, food spoilage was a constant threat to rural health.

In the mid-twentieth century, as home refrigeration and frozen foods became standard, the practical necessity of canning began to fade. However, the Mason jar did not disappear; it simply changed its identity. By the early 2000s, the jars experienced a massive resurgence as a “shabby chic” aesthetic icon. They are now commonly used as cocktail glasses, wedding centerpieces, and even rustic bathroom organizers. This transition from a rugged survival necessity to a trendy lifestyle accessory reflects a shift in how we value the past. While most modern users will never need to preserve a year’s worth of peaches, the enduring design of the Mason jar remains a symbol of domestic stability and timeless style.

Meat Juice Presses

In the Victorian era, the kitchen often doubled as a mini-hospital, and the meat juice press was one of its most important medical tools. During the late 1800s, it was widely believed by the medical community that the “essence” of meat contained vital life-giving properties. To extract this liquid, specialized heavy iron or silver presses were used to squeeze the raw or lightly seared juices out of beef. This concentrated “meat juice” was then fed to infants, the elderly, or patients suffering from wasting diseases like tuberculosis to help them regain their strength.

The most famous of these devices was the Valentine’s Meat Juice press, which gained immense popularity following its use during the illness of high-profile figures in the 1870s. However, as germ theory and modern nutrition advanced in the early 1900s, doctors realized that raw meat juices could actually carry dangerous bacteria and that the nutritional value was not as miraculous as once thought. By the mid-twentieth century, these bulky, metallic devices were rendered obsolete by vitamin supplements and better food safety standards. Today, meat juice presses are primarily found in museums, serving as a fascinating reminder of a time when the line between a chef and a doctor was incredibly thin.

Pastry Tools Replaced

For generations of home bakers, the pastry blender was a mandatory tool for achieving the perfect pie crust. This handheld device, consisting of several parallel U-shaped wires attached to a handle, allowed a cook to “cut” cold butter or lard into dry flour without the heat from their hands melting the fat. This technique was essential for creating the flaky layers that defined high-quality pastry throughout the early 1900s. In an era before automated appliances, the pastry blender was a prized possession in every kitchen drawer.

The decline of this tool began with the introduction of the electric food processor in the 1970s. These machines could achieve the same “cutting in” effect in seconds, whereas the manual method required several minutes of physical effort. As more households prioritized speed and convenience, traditional hand tools like the pastry blender were often relegated to the back of the cupboard. Despite this, many professional pastry chefs still prefer the manual tool today because it prevents over-mixing and provides a better texture than high-speed blades. This evolution highlights a common theme in household history: while technology can make a task faster, it doesn’t always make the final result better, leaving a niche for old-school tools to survive.

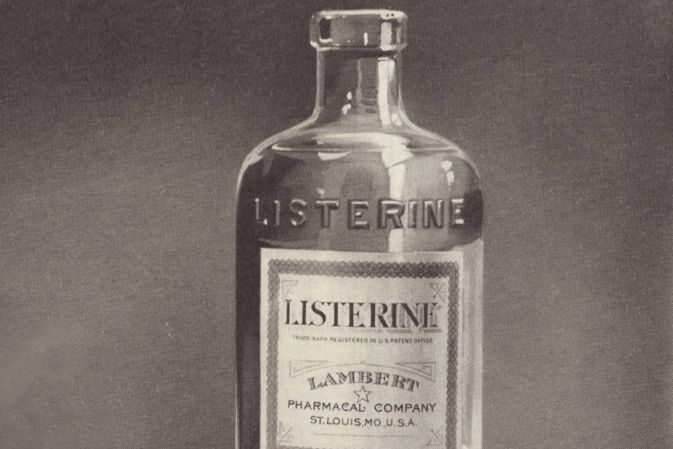

Listerine’s First Purpose

Listerine is a household name for oral hygiene, but its original intended use had nothing to do with fresh breath. Formulated in 1879 by Dr. Joseph Lawrence and Jordan Wheat Lambert, it was named after Sir Joseph Lister, the pioneer of antiseptic surgery. For the first several decades of its existence, Listerine was a powerful surgical antiseptic used to clean wounds and sterilize operating theaters. It was even marketed as a concentrated floor cleaner and a remedy for gonorrhea and smelly feet in the late nineteenth century.

The transformation into a mouthwash didn’t happen until the 1920s, when the company launched a brilliant, albeit aggressive, marketing campaign. They popularized the term “halitosis,” a Latin-sounding word for bad breath, to make a common condition seem like a serious medical disorder. By framing bad breath as a social deal-breaker that could prevent marriage or career success, the company successfully shifted Listerine from a harsh hospital chemical to a daily bathroom essential. This rebranding was so effective that by the mid-twentieth century, the product’s origins as a floor scrub were almost entirely erased from public memory. It remains a classic case study in how marketing can completely redefine a product’s purpose.

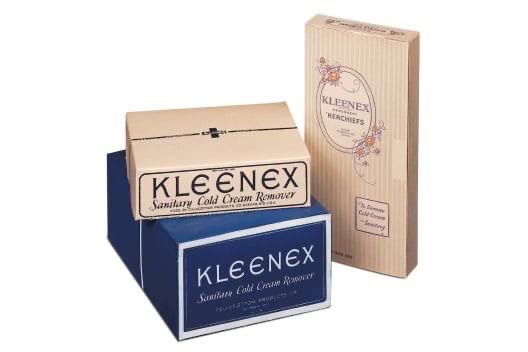

Kleenex’s Original Role

When Kleenex was first introduced to the public in 1924, it was never meant to be used for blowing noses. Kimberly-Clark originally marketed the product as a high-end beauty accessory called “Kleenex Kerchiefs.” These disposable sheets were designed for women to use as a hygienic way to remove cold cream and heavy makeup, which was a major trend in 1920s skincare routines. The goal was to provide a disposable alternative to cloth towels that would otherwise become stained with oils and pigments.

However, the company soon noticed a strange trend in customer feedback. Many people were writing in to say they were using the soft tissues to blow their noses during bouts of hay fever or the flu, finding them much more sanitary than carrying around a germ-filled cloth handkerchief. Recognizing a massive opportunity, the company shifted its advertising strategy in 1930 to promote Kleenex as “the handkerchief you can throw away.” Sales doubled almost immediately. By the mid-1930s, Kleenex had transitioned from a niche vanity item to a universal household necessity. This shift proves that customers often know more about a product’s true potential than the engineers who actually created it.

Wristwatches For Both Genders

Today, wristwatches are worn by everyone, but for most of history, they were considered strictly feminine “wristlets.” In the nineteenth century, a man would never be caught wearing a watch on his arm, as it was seen as a piece of delicate jewelry, much like a bracelet. Men carried pocket watches, which were larger, more rugged, and considered a symbol of masculine authority and punctuality. The idea of a “watch on a string” for men was actually mocked in newspapers as being dainty and impractical for serious work.

This perception was shattered by the brutal reality of the First World War (1914–1918). In the trenches, soldiers found it impossible to fumble with a pocket watch while holding a rifle or checking a map. They began soldering lugs onto small pocket watches and strapping them to their wrists with leather bands to coordinate “creeping barrages” and synchronized attacks. After the war ended, these “trench watches” became a symbol of heroism and ruggedness. By the 1920s, the fashion had completely flipped, and wristwatches became the standard for men worldwide. This transformation shows how the necessity of the battlefield can redefine gendered fashion norms and turn a decorative accessory into a vital survival tool.

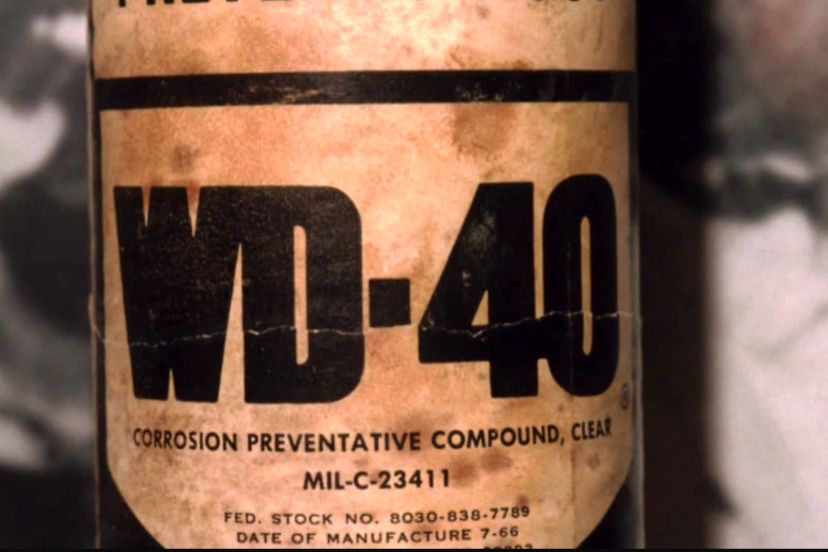

WD-40’s Cold War Start

WD-40 is a staple in modern garages and junk drawers, yet its origins are tied to the high-stakes world of aerospace engineering during the Cold War. Developed in 1953 by the Rocket Chemical Company in San Diego, California, it was never intended to fix a squeaky door hinge or a stiff bicycle chain. Instead, it was specifically engineered to act as a “water displacement” spray to prevent rust and corrosion on the outer skin of the Atlas missile. The secret formula was the result of intense experimentation by chemist Iver Norman Lawson, who finally perfected the mixture on his 40th attempt, leading to the name “WD-40.”

The product remained a specialized industrial tool for several years until workers at the aerospace plants began smuggling cans home to use on their own personal projects and household repairs. Recognizing a massive commercial opportunity, the company’s president, John S. Barry, decided to package the spray in aerosol cans for the general public in 1958. By the 1960s, it had become a household name, evolving from a top-secret military chemical into a universal solution for nearly every mechanical problem. Its journey from protecting nuclear missiles to cleaning kitchen tiles is a classic example of how military innovation often trickles down to improve everyday civilian life.

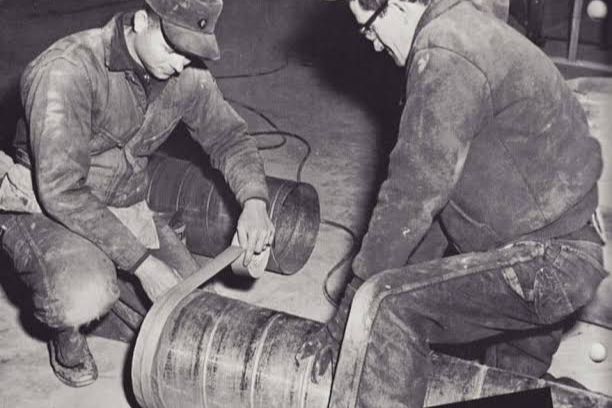

Duct Tape For War

Duct tape is often viewed as the ultimate quick-fix tool for home repairs, but its history began on the battlefields of World War II. In 1943, a female ordnance factory worker named Vesta Stoudt realized that the thin paper tape used to seal ammunition boxes was difficult for soldiers to open quickly under fire and wasn’t waterproof. She wrote a letter to President Franklin D. Roosevelt suggesting a strong, fabric-based waterproof tape that could be torn by hand. The idea was approved, and Johnson & Johnson developed a durable adhesive tape made from cotton duck cloth, which earned it the nickname “Duck Tape.”

After the war ended in 1945, the housing boom in the United States created a new demand for the product in the construction industry. Builders realized the tape was perfect for sealing joints in heating and air conditioning ducts, leading to its name being changed to “Duct Tape” and its color shifting from military green to the familiar metallic silver. By the 1950s and 1960s, it had fully transitioned from a wartime necessity to a DIY essential. Today, it remains one of the most versatile items in any household, proving that a simple suggestion from a concerned factory worker can change the way the entire world fixes things.

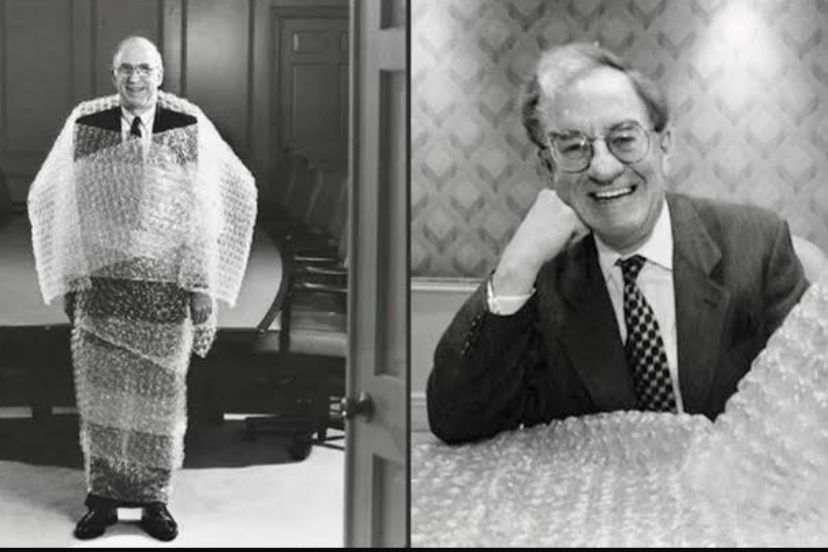

Bubble Wrap’s Failed Debut

Bubble wrap is the go-to material for shipping fragile goods, but its inventors originally had a much more stylish and unusual purpose in mind. In 1957, engineers Alfred Fielding and Marc Chavannes were attempting to create a new type of three-dimensional, textured wallpaper by sealing two plastic shower curtains together with air trapped inside. They believed the unique look would appeal to the “Space Age” aesthetic of the late 1950s. However, the public found the plastic, bubbly walls to be strange and impractical, leading to a complete failure in the interior design market.

Undeterred, the inventors tried to market the material as greenhouse insulation, but that also failed to gain traction. The breakthrough finally happened in 1960 when a marketing professional at their company, Sealed Air, realized that the air-filled bubbles provided perfect cushioning for delicate electronics. IBM became their first major customer, using the wrap to protect their new 1401 computers during shipping. This pivot saved the product from obscurity and turned it into an international multi-billion-dollar success. It is a striking reminder that even a “failed” invention can become a global necessity if you can just figure out the right problem for it to solve.

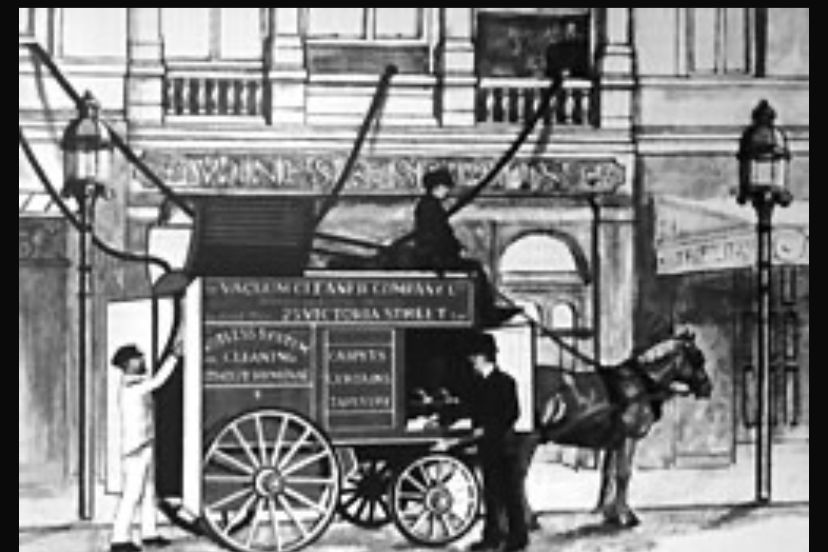

Early Vacuum Services

The vacuum cleaner is now a compact, battery-powered appliance we use daily, but its earliest ancestors were massive, horse-drawn machines that required a professional crew to operate. In 1901, British engineer Hubert Cecil Booth invented the “Puffing Billy,” a large internal combustion engine mounted on a carriage. Since the machine was far too large to fit through a front door, it stayed parked in the street while long, snake-like hoses were fed through the windows of the home. It was an expensive, loud, and public spectacle that only the wealthiest families could afford to hire for special occasions.

Because these early services were so costly and dramatic, vacuuming was seen as a luxury service rather than a standard chore for decades. It wasn’t until 1907 that an American janitor named James Murray Spangler created a portable electric version using a fan, a pillowcase, and a broom handle, which he eventually sold to the Hoover family. Throughout the 1920s and 1930s, the technology was refined to become smaller and more affordable, eventually moving into the average home. This shift from a heavy industrial service to a lightweight household tool reflects the broader 20th-century trend of making professional technology accessible to everyone for daily maintenance.

Flat Irons Before Electricity

In the centuries before the electric grid reached modern homes, maintaining crisp, wrinkle-free clothing was dangerous and exhausting physical labor. Households relied on “sad irons” (“sad” being an old word meaning heavy or solid), which were thick slabs of cast iron with a handle. These tools had to be heated directly on top of a coal stove or over an open flame. To keep a constant heat, a person usually needed at least two irons: one to use on the clothes while the other sat on the stove reheating. It was a delicate balance of timing and temperature.

The work was not only tiring but also risky, as an iron that was too hot would instantly scorch a white shirt, and an iron that was too cool would fail to remove the creases. This changed in the late 19th and early 20th centuries with the introduction of the first electric irons, patented by Henry W. Seeley in 1882. As electricity became common in the 1920s, ironing became a much safer and faster task. Today, these heavy antique irons are rarely used for their original purpose and are instead found in antique shops, serving as decorative doorstops or bookends. They serve as a powerful reminder of how much physical effort was once required for even the simplest grooming tasks.

Staplers Then And Now

Staplers are a quiet, reliable presence in modern offices, but their history began as a handcrafted luxury for the elite. One of the earliest recorded stapling devices was custom-made for King Louis XV of France in the mid-18th century. Each staple was allegedly inscribed with the royal court’s insignia, and the machine itself was an ornate, manual tool that could only handle one document at a time. For over a century, “paper fasteners” remained rare, expensive, and difficult to use, often requiring a hammer or a heavy lever to punch a single metal clip through a few sheets of paper.

It wasn’t until the late 19th century, specifically with the patent of the McGill Single-Stroke Staple Press in 1879, that the technology began to move toward the office desk. However, even these early models were frustrating because they had to be reloaded after every single use. The true revolution occurred in the 1920s and 1930s when companies like Swingline developed the “staple strip,” allowing dozens of staples to be loaded at once. This minor change in design made the stapler a global household and business essential. What was once a slow, royal luxury is now a cheap, mass-produced tool that we use thousands of times without a second thought.

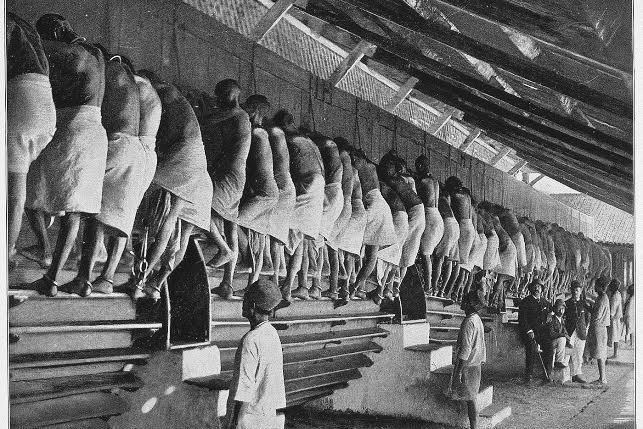

Treadmills As Punishment

The treadmill is a central feature of modern gyms, but for much of the 19th century, it was a feared symbol of the British prison system. Invented in 1818 by engineer Sir William Cubitt, the “penal treadmill” was a massive, rotating wooden cylinder with steps that prisoners were forced to climb for up to six hours a day. The goal was twofold: to crush the spirits of the inmates through grueling, repetitive labor and to occasionally grind grain or pump water. Prisoners would often climb the equivalent of several thousand feet in a single shift, leading to exhaustion and frequent injuries.

By the late 1800s, social reformers began to speak out against the cruelty of this practice, and it was eventually banned in the United Kingdom under the Prisons Act of 1898. The treadmill disappeared for decades until it was “reinvented” as a fitness machine in the late 1960s. Dr. Kenneth Cooper and mechanical engineer William Staub marketed the first home treadmill, the PaceMaster 600, as a way to improve cardiovascular health. It is a remarkable historical irony that a device originally designed to be a form of torture has become a voluntary activity that people now pay monthly subscriptions to access in the pursuit of wellness.

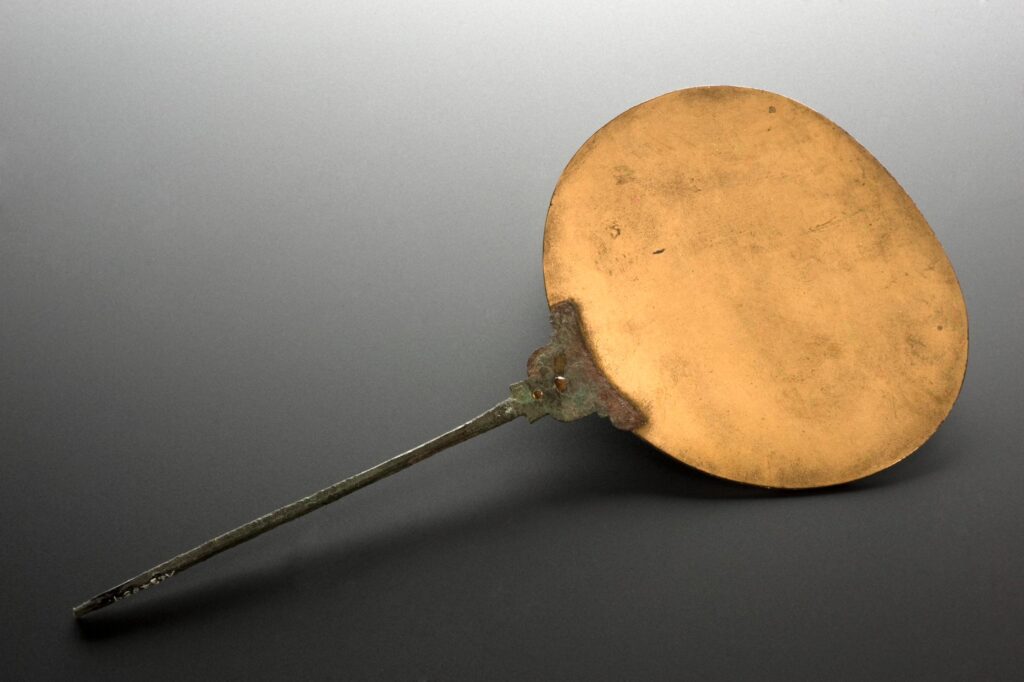

Mirrors As Luxury

Mirrors are so common today that we rarely think about them, but for most of human history, they were one of the rarest and most expensive objects a person could own. In ancient civilizations like Egypt and Rome, mirrors were made of highly polished bronze or silver, which produced a dark, distorted reflection and tarnished easily. Because they were handcrafted from precious metals, they were symbols of immense wealth and were often buried with royalty. Clear glass mirrors were virtually unknown until the 13th century, and even then, they were small and prohibitively expensive for anyone outside of the nobility.

The secret to high-quality mirror making was closely guarded by the glassmakers of Venice, Italy, for centuries. In the 1600s, a large mirror could cost as much as a naval ship or a country estate. It wasn’t until the mid-19th century, specifically with the invention of the “silvering” process by German chemist Justus von Liebig in 1835, that mirrors could be mass-produced safely and cheaply. This technological leap allowed mirrors to move from the halls of palaces into the bathrooms and bedrooms of ordinary citizens. Today, they are ubiquitous household items, yet they represent a long journey from ancient status symbols to modern daily tools.

Reusable Medical Syringes

In the modern world, medical syringes are strictly single-use items made of plastic, but this was not always the case. Prior to the 1950s, syringes were durable tools crafted from heavy glass and chrome-plated metal. Because they were expensive to manufacture, they were designed to be used hundreds of times across many different patients. Between every use, the syringe had to be disassembled, scrubbed by hand, and boiled in a sterilizer to kill bacteria and viruses. This was a time-consuming process that left a dangerous margin for human error and accidental cross-contamination.

The shift toward the disposable world began in 1949 with the invention of the first plastic, disposable syringe by Australian inventor Charles Rothauser. However, it took another decade for the technology to become cheap enough for mass adoption. The introduction of the “Monoject” disposable syringe in 1961 changed medicine forever, virtually eliminating the risk of spreading blood-borne diseases like hepatitis between patients. This evolution marks a significant turning point in public health history, where the priority shifted from the durability of the tool to the absolute safety of the patient. While glass syringes are now only found in museums, they represent an era when medical care required much more manual labor and specialized cleaning.

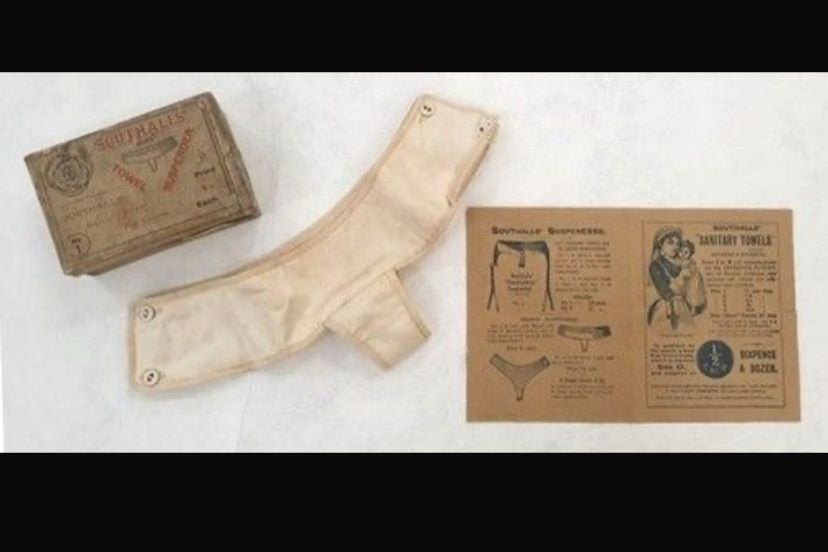

Sanitary Pads Evolve

Sanitary products have undergone a radical transformation in both design and social acceptance over the last century. In the late 1800s and early 1900s, “disposable” products were almost non-existent; most women used layers of knitted or woven cloth that had to be washed and reused, a practice that was both labor-intensive and difficult to manage while traveling or working. The first commercial disposable pads, such as “Lister’s Towels” released by Johnson & Johnson in 1896, actually failed because women were too embarrassed to ask for them by name at a store counter.

The real change occurred after World War I, when nurses noticed that the cellulose bandages used for wounded soldiers were more absorbent than cloth. This led to the creation of Kotex in 1920, but the pads still had to be worn with awkward belts and safety pins. It wasn’t until 1969 that the first “stay-free” pad with an adhesive strip was introduced, finally removing the need for bulky harnesses. This innovation provided women with unprecedented mobility and comfort, reflecting a broader social shift toward women’s rights and bodily autonomy. Today, these products are essential household items, but their evolution shows how much progress has been made in both engineering and breaking down social stigmas.